Diplomatic Documents and Swiss Newspapers in 1914

This project brings together two data sets for the year 1914: the Diplomatic Documents of Switzerland (Dodis) and the Le Temps Historical Archive. Our aim is to find links between the two data sets so that users can search the corpora together more easily. The project consists of two parts:

- The Geographical Browser of the corpora. We extract all places from the Dodis metadata and all places mentioned in each article of Le Temps. We then match documents and articles that refer to the same places and visualise them on a map for geographical browsing.

- The Text Similarity Search of the corpora. We train two models on the Le Temps corpus: Term Frequency-Inverse Document Frequency (TF-IDF) and Latent Semantic Indexing (LSI) with 25 topics. We then develop a web interface for text similarity search over the corpus and test it with Dodis summaries and full-text documents.
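
The place matching behind the Geographical Browser can be sketched as follows. This is a minimal illustration, not the project's actual code: the function names, the identifiers and the sample data are all hypothetical, and place names are assumed to be already extracted and to match after simple lower-casing and trimming.

```python
from collections import defaultdict


def index_by_place(items):
    """Map each normalised place name to the set of item ids that mention it."""
    index = defaultdict(set)
    for item_id, places in items.items():
        for place in places:
            # Assumption: lower-casing and trimming is enough to align spellings.
            index[place.strip().lower()].add(item_id)
    return index


def match_by_place(dodis_places, article_places):
    """Return, for every shared place, the Dodis documents and articles that mention it."""
    dodis_index = index_by_place(dodis_places)
    article_index = index_by_place(article_places)
    shared = sorted(set(dodis_index) & set(article_index))
    return {
        place: (sorted(dodis_index[place]), sorted(article_index[place]))
        for place in shared
    }


# Hypothetical sample data: ids and places are invented for illustration.
dodis_places = {"dodis-1": ["Bern", "Vienna"], "dodis-2": ["Paris"]}
article_places = {
    "article-a": ["vienna", "Belgrade"],
    "article-b": ["Paris", "Bern "],
}

links = match_by_place(dodis_places, article_places)
for place, (documents, articles) in links.items():
    print(place, documents, articles)
```

Each entry of `links` could then be attached to the coordinates of its place and drawn as one marker on the map.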

Data and source code

- Data.zip (CC BY 4.0) (DropBox)

Documentation

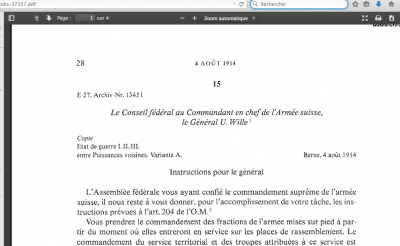

In this project, we want to connect newspaper articles from the Journal de Genève (a Genevan daily newspaper) and the Gazette de Lausanne to a sample of the Diplomatic Documents of Switzerland database (Dodis). The goal is to query the Dodis descriptive metadata and, by comparing occurrences in both data sets, to find what appears in the press over a given time interval. We should thus be able to examine whether and how the written press reflected what was happening at the diplomatic level. The time interval for this project is the summer of 1914.

We first cleaned the data, for example by removing very short strings of characters and stopwords. This cleaning is a necessary step to reduce noise in the corpus. We then prepared TF-IDF word vectors and LSI topics and represented each article in the corpus with them. Finally, we indexed the whole corpus of Le Temps to prepare it for similarity queries. The last step was to build an interface to search the corpus by entering a text (e.g., a Dodis summary).
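
The cleaning, vectorisation and query steps can be illustrated with a small standard-library sketch. It covers only the TF-IDF part with cosine similarity; the LSI projection to 25 topics is left out. The stopword list, the minimum token length, the function names and the sample sentences are assumptions made for the example, not the project's actual settings or data.

```python
import math
import re
from collections import Counter

# Assumptions for illustration: a tiny stopword list and a length threshold.
STOPWORDS = {"le", "la", "les", "de", "des", "du", "et", "un", "une", "en"}
MIN_LENGTH = 3


def tokenize(text):
    """Lower-case, keep alphabetic tokens, drop short strings and stopwords."""
    words = re.findall(r"[^\W\d_]+", text.lower())
    return [w for w in words if len(w) >= MIN_LENGTH and w not in STOPWORDS]


def tfidf_vector(tokens, idf):
    """Build an L2-normalised sparse TF-IDF vector (dict of term -> weight)."""
    counts = Counter(t for t in tokens if t in idf)
    vector = {t: c * idf[t] for t, c in counts.items()}
    norm = math.sqrt(sum(v * v for v in vector.values()))
    return {t: v / norm for t, v in vector.items()} if norm else {}


def build_index(corpus):
    """Compute IDF weights and one TF-IDF vector per article."""
    tokenized = [tokenize(doc) for doc in corpus]
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    idf = {t: math.log(len(corpus) / df) + 1.0 for t, df in doc_freq.items()}
    return idf, [tfidf_vector(tokens, idf) for tokens in tokenized]


def search(query, idf, vectors):
    """Rank articles by cosine similarity to the query text, best first."""
    q = tfidf_vector(tokenize(query), idf)
    scores = [
        (sum(w * vec.get(t, 0.0) for t, w in q.items()), i)
        for i, vec in enumerate(vectors)
    ]
    return sorted(scores, reverse=True)


# Hypothetical sample articles and query, invented for illustration.
articles = [
    "La mobilisation générale de l'armée suisse est décrétée",
    "Le prix du blé augmente sur le marché de Genève",
    "Le Conseil fédéral déclare la neutralité de la Suisse",
]
idf, vectors = build_index(articles)
results = search("Déclaration de neutralité du Conseil fédéral", idf, vectors)
best_score, best_index = results[0]
print(articles[best_index])
```

Because the vectors are normalised, the dot product in `search` is the cosine similarity. An LSI model would apply the same query logic after projecting these vectors onto a smaller number of topics.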

Difficulties were not only technical. For example, the data are massive: we first worked on a fifteen-day sample, then extended it to three months. Moreover, some Dodis documents were classified (i.e. not public) at the time, so some of the decisions do not appear in the newspaper articles. We also used the TXM software, a platform for lexicometry and statistical text analysis, to explore both text corpora (the Dodis metadata and the newspapers) and to detect the frequencies of significant words and their presence in both corpora.